Data preprocessing may not have the glamour of cutting-edge algorithms or sleek user interfaces, but it’s the unsung hero behind the scenes that transforms raw data into actionable insights and exceptional user experiences.

Table of contents:

- Understanding Data Preprocessing
- Data Preprocessing in Machine Learning
- Data Preprocessing for API Development
- Data Preprocessing in Mobile Applications
- Challenges in Data Preprocessing
- Tools and Libraries for Data Preprocessing
- Data Preprocessing Workflow
- Data Preprocessing Best Practices
- Impact on Model Performance
- Real-Life Examples
- FAQs

Understanding Data Preprocessing

Data preprocessing is the work of cleaning and reshaping raw data so that it is consistent and ready for analysis. Imagine you have a dataset of customer reviews. Preprocessing it involves tasks like removing duplicate reviews, correcting spelling errors, and extracting sentiment scores, so that the data you analyse is clean and reliable.
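To make the duplicate-removal step concrete, here is a minimal sketch using only Python’s standard library. The function names and the sample reviews are made up for illustration, and the rule that two reviews count as duplicates when they match after lower-casing and collapsing whitespace is an assumption; a real project may need a stricter or looser rule.

```python
import re


def normalise_review(text: str) -> str:
    """Lower-case the text and collapse runs of whitespace (assumed matching rule)."""
    return re.sub(r"\s+", " ", text).strip().lower()


def drop_duplicate_reviews(reviews: list[str]) -> list[str]:
    """Keep the first occurrence of each review, comparing normalised text."""
    seen: set[str] = set()
    unique: list[str] = []
    for review in reviews:
        key = normalise_review(review)
        # Skip empty reviews and anything already seen in normalised form.
        if key and key not in seen:
            seen.add(key)
            unique.append(review.strip())
    return unique


# Hypothetical sample data.
reviews = [
    "Great product, works well!",
    "great product,   works well! ",
    "Stopped working after a week.",
    "",
]
cleaned = drop_duplicate_reviews(reviews)
print(cleaned)
```

The second review differs from the first only in case and spacing, so it is dropped along with the empty entry.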

Data Preprocessing in Machine Learning

In machine learning, data is the fuel that powers models. Imagine you’re building a spam email classifier. Data preprocessing involves not only cleaning your dataset but also transforming text data into numerical features, like word counts or TF-IDF scores, because most learning algorithms work on numbers rather than raw text. As a purely illustrative figure, your spam classifier’s accuracy might rise from 85% to 95% after preprocessing, thanks to the removal of noisy data and the transformation of text into a usable format.
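The text-to-numbers step can be sketched in a few lines. The example below computes the textbook form of TF-IDF (term frequency multiplied by log(N / document frequency)) with the standard library only. The function names and sample emails are hypothetical, and libraries such as scikit-learn use a smoothed variant, so their numbers will differ from these.

```python
import math
import re
from collections import Counter


def tokenise(text):
    """Split text into lower-case word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def tf_idf(documents):
    """Return (vocabulary, vectors) using tf * log(N / df), with no smoothing."""
    tokenised = [tokenise(doc) for doc in documents]
    n_docs = len(tokenised)

    # Document frequency: in how many documents each term appears.
    doc_freq = Counter()
    for tokens in tokenised:
        doc_freq.update(set(tokens))

    vocabulary = sorted(doc_freq)
    idf = {term: math.log(n_docs / doc_freq[term]) for term in vocabulary}

    vectors = []
    for tokens in tokenised:
        counts = Counter(tokens)
        total = len(tokens) or 1  # avoid dividing by zero for empty documents
        vectors.append([counts[term] / total * idf[term] for term in vocabulary])
    return vocabulary, vectors


# Hypothetical sample emails.
emails = [
    "Win a free prize now",
    "Meeting agenda for Monday",
    "Claim your free prize",
]
vocabulary, vectors = tf_idf(emails)
free_column = vocabulary.index("free")
print([round(row[free_column], 4) for row in vectors])
```

Each email becomes a fixed-length list of numbers, one per vocabulary term, which is the shape a classifier expects as input.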

Data Preprocessing for API Development

APIs are like digital messengers, delivering data to different applications. Let’s take a weather API as an example. Before delivering weather forecasts to your smartphone, the API processes data from various weather stations. Data preprocessing ensures that the temperatures are in the same unit, the dates are formatted consistently, and any missing readings are interpolated. As a result, when you check the weather on your phone, the information you receive is consistent and as accurate as the underlying measurements allow.
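Those three steps could look roughly like the sketch below. Everything here is an assumption made for illustration: the function names, the two accepted date formats, the choice of Celsius as the target unit, and the idea that readings are evenly spaced so a missing value can be filled by linear interpolation between its neighbours.

```python
from datetime import datetime


def to_celsius(value, unit):
    """Convert a temperature in C, F, or K to Celsius."""
    if unit == "C":
        return value
    if unit == "F":
        return (value - 32) * 5 / 9
    if unit == "K":
        return value - 273.15
    raise ValueError(f"unknown unit: {unit}")


def to_iso_date(raw):
    """Parse a date in one of the assumed input formats and return YYYY-MM-DD."""
    for fmt in ("%Y-%m-%d", "%d/%m/%Y"):
        try:
            return datetime.strptime(raw, fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognised date: {raw}")


def interpolate_missing(values):
    """Fill None gaps linearly; gaps at the edges copy the nearest known value."""
    filled = list(values)
    known = [i for i, v in enumerate(values) if v is not None]
    if not known:
        return filled
    for i, v in enumerate(values):
        if v is not None:
            continue
        left = max((k for k in known if k < i), default=None)
        right = min((k for k in known if k > i), default=None)
        if left is None:
            filled[i] = values[right]
        elif right is None:
            filled[i] = values[left]
        else:
            fraction = (i - left) / (right - left)
            filled[i] = values[left] + fraction * (values[right] - values[left])
    return filled


# Hypothetical station readings: (date, temperature, unit).
readings = [
    ("2024-03-01", 50.0, "F"),
    ("02/03/2024", None, "C"),
    ("2024-03-03", 14.0, "C"),
]
dates = [to_iso_date(day) for day, _, _ in readings]
temps = [None if t is None else to_celsius(t, unit) for _, t, unit in readings]
temps_filled = interpolate_missing(temps)
print(list(zip(dates, temps_filled)))
```

Linear interpolation is only one option. For long gaps or irregular timestamps, a production API would more likely flag the value as missing than guess it.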

Data Preprocessing in Mobile Applications

Mobile apps have become integral to our daily lives. Think about a ride-sharing app. To provide you with an estimated arrival time, the app needs to process real-time location data, traffic information, and user preferences. Data preprocessing is what makes sure this complex data is handled seamlessly, providing you with an accurate and hassle-free experience. Imagine the frustration if your ride-sharing app constantly gave incorrect arrival times due to poorly processed data!

Challenges in Data Preprocessing

Data preprocessing isn’t always smooth sailing. One common challenge is dealing with missing data. Let’s say you’re analysing sales data, and some sales records have missing values for the product price. Data preprocessing techniques like imputation can fill in these gaps with reasonable estimates, such as the mean or median of the known prices, so your analysis isn’t skewed by missing information.

Another challenge is outlier detection. For instance, in a dataset of customer ages, an entry claiming someone is 150 years old is almost certainly an error. Outliers like these can significantly affect analysis results. Simple rules such as a plausible-range check or the interquartile-range (IQR) test can flag such values so they can be corrected or excluded.
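Both challenges can be illustrated with the standard library’s `statistics` module. This is a sketch under stated assumptions: the missing prices are filled with the median of the known ones (the mean is an equally common choice), and outliers are flagged with Tukey’s rule, meaning anything more than 1.5 × IQR outside the quartiles. The function names and sample values are hypothetical.

```python
from statistics import median, quantiles


def impute_with_median(values):
    """Replace None entries with the median of the known values."""
    known = [v for v in values if v is not None]
    fill = median(known)
    return [fill if v is None else v for v in values]


def iqr_outliers(values, k=1.5):
    """Return values lying more than k * IQR outside the first or third quartile."""
    q1, _, q3 = quantiles(values, n=4)
    spread = q3 - q1
    lower, upper = q1 - k * spread, q3 + k * spread
    return [v for v in values if v < lower or v > upper]


# Hypothetical sample data.
prices = [19.99, None, 24.99, 21.50, None]
ages = [23, 35, 41, 29, 150, 52, 38]

print(impute_with_median(prices))
print(iqr_outliers(ages))
```

Flagging is deliberately separate from fixing: whether an outlier should be dropped, capped, or investigated depends on the dataset.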

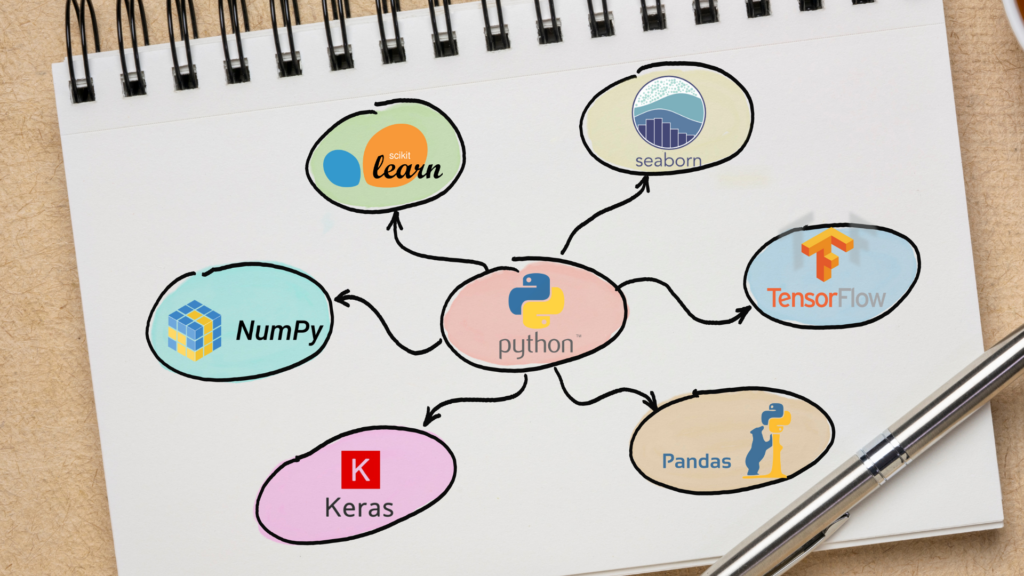

Tools and Libraries for Data Preprocessing

Thankfully, you don’t need to reinvent the wheel in data preprocessing. There are powerful tools and libraries like Pandas and scikit-learn in Python that simplify the process. Let’s say you’re working with a dataset of customer reviews for a product. Using Pandas, you can remove duplicates and extract the relevant fields with just a few lines of code, then pass the cleaned text to a dedicated natural language processing library for sentiment analysis, since Pandas itself does not score sentiment. These tools save time and help keep your data preprocessing consistent.

Data Preprocessing Workflow

To demystify the data preprocessing process, let’s walk through a concrete example. Imagine you’re working with a dataset of customer orders for an e-commerce website. Your data preprocessing workflow might involve cleaning the data by removing duplicate orders and handling missing values by imputing them with the average order value. Then, you might transform categorical data, like product categories, into numerical values using one-hot encoding. This transformed data can then be fed into a machine-learning model to predict future customer behaviour more reliably.
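The workflow above can be sketched end to end in plain Python. The field names (`order_id`, `category`, `value`), the sample orders, and the rule that a repeated `order_id` marks a duplicate are all assumptions for illustration; with Pandas, the same steps are typically much shorter.

```python
from statistics import mean


def remove_duplicate_orders(rows):
    """Keep the first row seen for each order_id."""
    seen = set()
    unique = []
    for row in rows:
        if row["order_id"] not in seen:
            seen.add(row["order_id"])
            unique.append(dict(row))
    return unique


def impute_mean_value(rows):
    """Fill missing order values with the average of the known values."""
    average = mean(r["value"] for r in rows if r["value"] is not None)
    return [
        {**r, "value": average if r["value"] is None else r["value"]}
        for r in rows
    ]


def one_hot_encode(rows, column):
    """Replace a categorical column with one 0/1 column per category."""
    categories = sorted({r[column] for r in rows})
    encoded = []
    for r in rows:
        new_row = {k: v for k, v in r.items() if k != column}
        for category in categories:
            new_row[f"{column}_{category}"] = 1 if r[column] == category else 0
        encoded.append(new_row)
    return encoded


# Hypothetical sample orders.
orders = [
    {"order_id": 1, "category": "books", "value": 20.0},
    {"order_id": 2, "category": "toys", "value": None},
    {"order_id": 1, "category": "books", "value": 20.0},  # duplicate
    {"order_id": 3, "category": "garden", "value": 40.0},
]

prepared = one_hot_encode(
    impute_mean_value(remove_duplicate_orders(orders)), "category"
)
for row in prepared:
    print(row)
```

The order of the steps matters: duplicates are removed before the average is computed so that a repeated order does not bias the imputed value.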

Data Preprocessing Best Practices

Success in data preprocessing hinges on following best practices. For instance, always start by understanding your data and its quirks. This helps you tailor your preprocessing steps to the specific dataset. When dealing with text data, consider techniques like tokenisation, stemming, or lemmatisation to reduce words to a consistent form before analysis. Additionally, document your preprocessing steps meticulously so others can reproduce your work.
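As a rough illustration of the text techniques mentioned above, the sketch below tokenises a sentence and then applies a crude suffix stripper. The suffix list and function names are made up for this example, and the stripper is only a toy stand-in for a real stemmer such as the Porter algorithm; lemmatisation needs a dictionary-backed library and is not shown.

```python
import re

# Assumed, deliberately tiny suffix list; real stemmers use many more rules.
SUFFIXES = ("ing", "ed", "s")


def tokenise(text):
    """Split text into lower-case alphabetic tokens."""
    return re.findall(r"[a-z]+", text.lower())


def crude_stem(token):
    """Strip the first matching suffix, keeping at least three characters."""
    for suffix in SUFFIXES:
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token


sentence = "Shipping was delayed and the chargers stopped working"
tokens = tokenise(sentence)
stems = [crude_stem(token) for token in tokens]
print(stems)
```

As with real stemmers, the results are not always dictionary words ("stopp", "shipp"); the goal is only that related word forms map to the same string.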

Impact on Model Performance

Data preprocessing isn’t just a routine; it’s a game-changer. Suppose you’re working on a recommendation system for an e-commerce platform. By preprocessing user data, you can remove records that add noise, like incomplete user profiles, and normalise user behaviour data so that features measured on different scales become comparable. As a result, your recommendation system can become noticeably more accurate, leading to increased user engagement and sales. On a large platform, even a small improvement of this kind can translate into substantial revenue.
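Here is a small sketch of those two steps. It assumes that "incomplete" means any field is missing and that "normalise" means min-max scaling to the range 0 to 1; both are choices made for this example, as are the field names and sample users.

```python
def min_max_scale(values):
    """Rescale values linearly so the smallest becomes 0.0 and the largest 1.0."""
    lowest, highest = min(values), max(values)
    if highest == lowest:
        # All values identical: there is no spread to scale.
        return [0.0 for _ in values]
    return [(v - lowest) / (highest - lowest) for v in values]


# Hypothetical user records.
users = [
    {"user_id": "u1", "age": 34, "purchases": 2},
    {"user_id": "u2", "age": None, "purchases": 10},
    {"user_id": "u3", "age": 27, "purchases": 6},
    {"user_id": "u4", "age": 45, "purchases": 12},
]

# Drop profiles with any missing field.
complete = [u for u in users if all(v is not None for v in u.values())]

# Scale purchase counts so they are comparable with other 0-1 features.
scaled = min_max_scale([u["purchases"] for u in complete])
print([u["user_id"] for u in complete], scaled)
```

Dropping incomplete profiles is the simplest policy; if many profiles are incomplete, imputing the missing fields may preserve more useful data.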

Real-Life Examples

Let’s dive into some real-life success stories. Take a healthcare analytics company, for instance. By meticulously preprocessing patient data, they improved the accuracy of their predictive models for disease outbreaks. This allowed healthcare providers to allocate resources more efficiently and save lives. Similarly, a retail giant optimised its supply chain operations by preprocessing supply and demand data. This led to reduced stockouts, lower costs, and happier customers.

In the world of technology, data preprocessing may be the unsung hero, but it’s undeniably essential. Whether you’re training machine learning models, developing APIs, or crafting mobile applications, clean and well-processed data is the foundation of success. It ensures accurate predictions, seamless user experiences, and real-world impact. So, the next time you interact with a smart app or receive a tailored recommendation, remember the silent champion working behind the scenes—data preprocessing.

FAQs

1. What is data preprocessing? Data preprocessing is the process of cleaning, transforming, and organising raw data into a usable format for analysis, machine learning, or application development. For example, it includes removing duplicates, handling missing values, and transforming text data into numerical features.

2. Can you provide an example of how data preprocessing improves machine learning model accuracy? Certainly! In a fraud detection model, data preprocessing might involve removing duplicate transactions, handling missing values in transaction records, and normalising transaction amounts. After these steps, the model can focus on relevant, consistent data, which typically leads to more accurate fraud detection.

3. How does data preprocessing enhance API performance? APIs rely on well-processed data to provide accurate information. For instance, a financial API processing stock market data might normalise stock prices and filter out erroneous data. This helps users receive consistent and reliable stock information when querying the API.

4. How can data preprocessing improve the user experience in mobile applications? Consider a navigation app that preprocesses traffic data. Accurate traffic information helps users plan their routes effectively, reducing frustration due to unexpected delays. Users are more likely to trust and continue using such an app.

5. Are there any real-world examples of organisations benefiting from data preprocessing? Absolutely! For instance, a healthcare analytics firm used data preprocessing to refine patient data, leading to more accurate predictions of disease outbreaks and better resource allocation. Similarly, a retail giant preprocessed its supply and demand data to optimise its supply chain operations, reducing stockouts and costs.